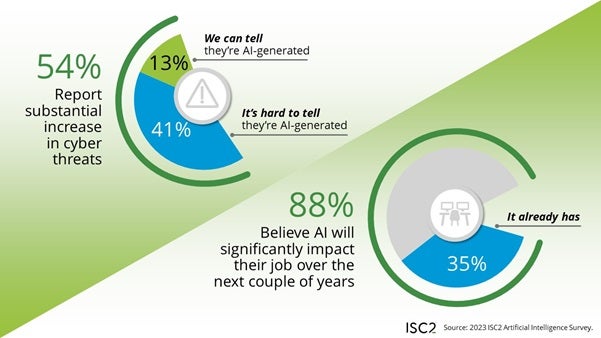

Most cybersecurity professionals (88%) believe AI will significantly impact their jobs, according to a new survey by the International Information System Security Certification Consortium (ISC2), but only 35% of respondents have already witnessed AI’s effects on their jobs (Figure A). The expected impact is not necessarily positive or negative; rather, it indicates that cybersecurity pros expect their jobs to change. Respondents also raised concerns about deepfakes, misinformation and social engineering attacks. The survey also covered policies, access and regulation.

How AI might affect cybersecurity pros’ tasks

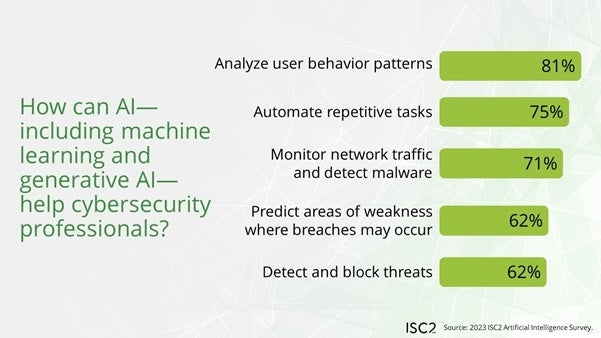

Survey respondents generally believe that AI will make cybersecurity jobs more efficient (82%) and will free up time for higher-value work by handling other tasks (56%). In particular, respondents said AI and machine learning could take over these aspects of cybersecurity jobs (Figure B):

- Analyzing user behavior patterns (81%).

- Automating repetitive tasks (75%).

- Monitoring network traffic and detecting malware (71%).

- Predicting where breaches might occur (62%).

- Detecting and blocking threats (62%).

The survey doesn’t necessarily rank a response of “AI will make some parts of my job obsolete” as negative; instead, it’s framed as an improvement in efficiency.

Top AI cybersecurity concerns and possible effects

In terms of AI-related attacks and risks, the professionals surveyed were most concerned about:

- Deepfakes (76%).

- Disinformation campaigns (70%).

- Social engineering (64%).

- The current lack of regulation (59%).

- Ethical concerns (57%).

- Privacy invasion (55%).

- The risk of data poisoning, intentional or accidental (52%).

The community surveyed was conflicted on whether AI would be better for cyber attackers or defenders. When asked about the statement “AI and ML benefit cybersecurity professionals more than they do criminals,” 28% agreed, 37% disagreed and 32% were unsure.

Of the surveyed professionals, 13% said they were confident they could definitively link a rise in cyber threats over the last six months to AI; 41% said they couldn’t make a definitive connection between AI and the rise in threats. (Both of these statistics are subsets of the group of 54% who said they’ve seen a substantial increase in cyber threats over the last six months.)

SEE: The UK’s National Cyber Security Centre warned generative AI could increase the volume and impact of cyberattacks over the next two years – although it’s a little more complicated than that. (TechRepublic)

Threat actors could use generative AI to launch attacks at speeds and volumes not possible with even a large team of humans. However, it’s still unclear how generative AI has affected the threat landscape so far.

In flux: Implementation of AI policies and access to AI tools in businesses

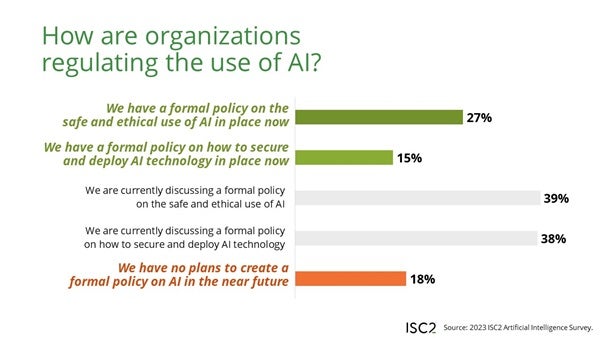

Only 27% of ISC2 survey respondents said their organizations have formal policies in place for safe and ethical use of AI; another 15% said their organizations have formal policies on how to secure and deploy AI technology (Figure C). Most organizations are still working on drafting an AI use policy of one kind or another:

- 39% of respondents’ companies are working on AI ethics policies.

- 38% of respondents’ companies are working on AI safe and secure deployment policies.

The survey found a wide variety of approaches to allowing employees access to AI tools. Respondents chose among the following statements:

- My organization has blocked access to all generative AI tools (12%).

- My organization has blocked access to some generative AI tools (32%).

- My organization allows access to all generative AI tools (29%).

- My organization has not had internal discussions about allowing or disallowing generative AI tools (17%).

- I don’t know my organization’s approach to generative AI tools (10%).

The adoption of AI is still in flux and will likely keep changing as the market grows, falls or stabilizes. Cybersecurity professionals may be at the forefront of awareness about generative AI issues in the workplace, since it affects both the threats they respond to and the tools they use for work. A majority of cybersecurity professionals surveyed (60%) said they feel confident they could lead the rollout of AI in their organization.

“Cybersecurity professionals anticipate both the opportunities and challenges AI presents, and are concerned their organizations lack the expertise and awareness to introduce AI into their operations securely,” said ISC2 CEO Clar Rosso in a press release. “This creates a tremendous opportunity for cybersecurity professionals to lead, applying their expertise in secure technology and ensuring its safe and ethical use.”

How generative AI should be regulated

How generative AI is regulated will depend largely on the interplay between government regulation and major tech organizations. Four out of five survey respondents said they “see a clear need for comprehensive and specific regulations” over generative AI. How to regulate it is a complicated matter: 72% of respondents agreed with the statement that different types of AI will need different regulations. Respondents also weighed in on where regulation should come from:

- 63% said regulation of AI should come from collaborative government efforts (ensuring standardization across borders).

- 54% said regulation of AI should come from national governments.

- 61% (polled in a separate question) would like to see AI experts come together to support the regulation effort.

- 28% favor private sector self-regulation.

- 3% want to retain the current unregulated environment.

ISC2’s methodology

The survey was distributed between November and December 2023 to an international group of 1,123 cybersecurity professionals who are ISC2 members.

The definition of “AI” can be ambiguous. While the report uses the general terms “AI” and “machine learning” throughout, it describes its subject matter as “public-facing large language models” such as ChatGPT, Google Gemini or Meta’s Llama, usually known as generative AI.
